Why Your Code Reviews Are Stuck (And the 5 Surprising Ways AI Fixes the Flow)

Synergies4 AI-Assisted Code Review Flow

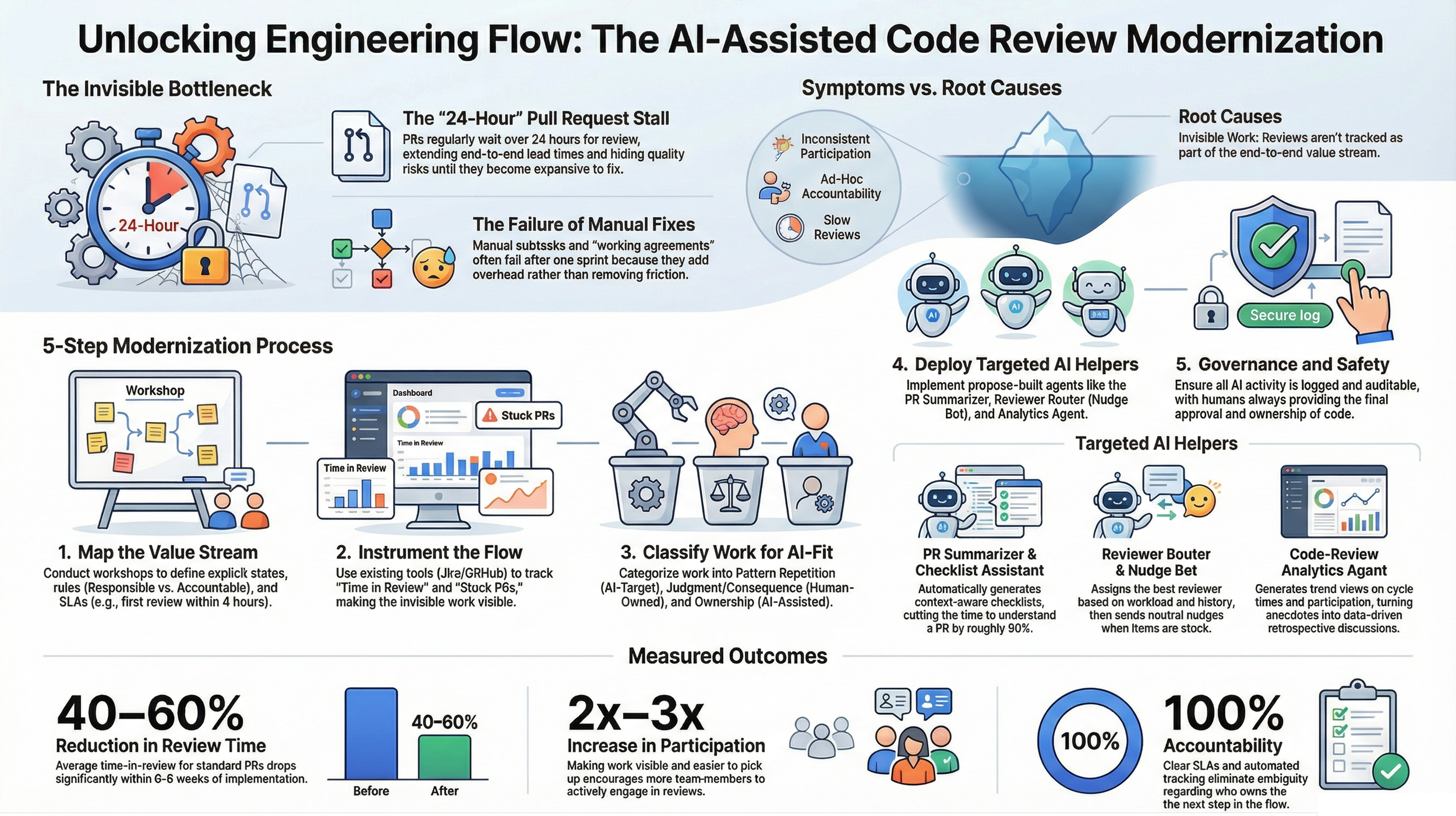

Every engineering leader knows the frustration of the "stalled" Pull Request (PR). A developer completes a feature, submits the code, and then the work sits in limbo for 24, 48, or even 72 hours. This stagnation erodes team confidence and extends lead times from days to weeks.

Common attempts to fix this usually involve manual "nagging" or administrative overhead. Scrum Masters often resort to creating Jira subtasks for reviews, sending manual Slack pings, or pleading for participation during Daily Stand-ups. These tactics fail because they treat human memory as the solution rather than addressing the systemic breakdown in the delivery pipeline.

1. It’s a Flow Problem, Not a Maturity Problem

Many organizations view slow reviews as a lack of discipline, often prescribing "maturity lectures" to developers. This perspective is a remnant of the "Project" mindset, where work is viewed as a series of disconnected tasks rather than a continuous "Product" flow. Relying on goodwill and social pressure is a recipe for inconsistency and burnout.

Synergies4 argues that the bottleneck is a failure of systems engineering, not individual performance. To achieve a true "Project-to-Product" transition, code review must be treated as a first-class flow stage with system-level visibility rather than a side activity.

"This is not a Scrum Master execution problem and not a maturity lecture problem, it is a flow engineering problem that needs system level support."

2. Making "Invisible Work" Visible with Metrics

Code review is often "invisible work" because it rarely appears as an explicit stage in an end-to-end value stream map. Without instrumentation, review tasks are easily deprioritized in favor of new feature development. We turn anecdotal complaints about slow reviews into objective data by wiring metrics directly into GitHub, Bitbucket, GitLab, or Azure DevOps.

By moving away from opinions and toward evidence, teams can identify exactly where work is stalling. The following four metrics are essential for establishing a baseline of flow:

• Time in Review: Average and 90th-percentile time spent in the review stage; the 90th percentile exposes the worst-case waits that an average hides.

• Stuck PRs: The number of PRs per sprint that wait more than 24 hours for review.

• Quality Impact: A direct comparison of defect rates between peer-reviewed and non-peer-reviewed code.

• Participation: Tracking load distribution to ensure the review burden is shared across the team.
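As a rough illustration, three of these metrics can be computed from exported PR records. The sketch below assumes a hypothetical `PullRequest` record (opened/closed timestamps plus the people who reviewed it) rather than any particular Git host's API, and treats the whole opened-to-closed span as time in review. Quality Impact is left out because it needs defect data joined from an issue tracker.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical record shape; map your Git host's export onto these fields.
    number: int
    opened_at: datetime
    closed_at: Optional[datetime]  # None while the PR is still open
    reviewers: list[str]           # everyone who left at least one review


def hours_in_review(pr: PullRequest, now: datetime) -> float:
    # Assumption: time in review = opened -> closed; open PRs are measured up to `now`.
    end = pr.closed_at or now
    return (end - pr.opened_at).total_seconds() / 3600


def p90(values: list[float]) -> float:
    # Nearest-rank 90th percentile; (9n + 9) // 10 is ceil(0.9 * n) in integer arithmetic.
    ordered = sorted(values)
    return ordered[(9 * len(ordered) + 9) // 10 - 1]


def review_metrics(prs: list[PullRequest], now: datetime, stuck_after_hours: float = 24) -> dict:
    durations = [hours_in_review(pr, now) for pr in prs]
    return {
        "avg_hours": sum(durations) / len(durations),
        "p90_hours": p90(durations),
        # PRs in this batch (e.g. one sprint) that spent longer than the threshold in review.
        "stuck_prs": sum(1 for d in durations if d > stuck_after_hours),
        # Review load per person, to spot a few people carrying the whole team.
        "reviews_per_person": dict(Counter(r for pr in prs for r in pr.reviewers)),
    }


# Made-up sprint data, purely for illustration.
now = datetime(2024, 5, 10, 12, 0)
prs = [
    PullRequest(1, now - timedelta(hours=30), now - timedelta(hours=20), ["ana"]),  # 10 h
    PullRequest(2, now - timedelta(hours=50), None, []),                            # 50 h, still open
    PullRequest(3, now - timedelta(hours=6), now, ["ana", "raj"]),                  # 6 h
]
print(review_metrics(prs, now))
# {'avg_hours': 22.0, 'p90_hours': 50.0, 'stuck_prs': 1, 'reviews_per_person': {'ana': 2, 'raj': 1}}
```

Note how the single 50-hour PR barely moves the average but defines the 90th percentile, which is why both are tracked.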

3. The Three-Tier Work Classification

A "one-size-fits-all" approach to AI implementation usually breeds skepticism among senior engineers. To build trust, we use a "Safety by Design" framework that classifies work by its suitability for AI intervention. This ensures that AI handles the drudgery while humans retain ownership of high-consequence logic.

• Type 1 (Pattern Repetition): Prime targets for AI. This includes linting, style enforcement, coverage hints, and changelog summaries.

• Type 2 (Judgment/Consequence): Human-owned. Security-sensitive code, architectural changes, and complex logic require human accountability.

• Type 3 (Ownership/Compounding): AI-assisted, human-led. This covers process improvements and long-term refactoring where AI supports human strategy.
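One way to make the classification enforceable is a small routing rule that runs on each PR. In this sketch the path patterns and label names are hypothetical placeholders, and two design choices are assumptions made for the sketch rather than part of the framework: the strictest matching rule wins, and anything the rules do not recognize defaults to human ownership.

```python
from enum import Enum
from fnmatch import fnmatch


class Tier(Enum):
    TYPE_1 = "pattern repetition: AI-handled"
    TYPE_2 = "judgment/consequence: human-owned"
    TYPE_3 = "ownership/compounding: AI-assisted, human-led"


# Hypothetical rules; each team would agree on its own lists.
SENSITIVE_PATTERNS = ["*auth*", "*security*", "*crypto*", "infra/*"]
ROUTINE_PATTERNS = ["*.md", "docs/*", "CHANGELOG*"]
COMPOUNDING_LABELS = {"refactor", "process"}


def matches(path: str, patterns: list[str]) -> bool:
    return any(fnmatch(path, pattern) for pattern in patterns)


def classify_change(paths: list[str], labels: set[str]) -> Tier:
    # Strictest rule first: touching anything sensitive keeps the review human-owned.
    if any(matches(p, SENSITIVE_PATTERNS) for p in paths):
        return Tier.TYPE_2
    # Long-term refactoring or process work: AI assists, a human leads.
    if labels & COMPOUNDING_LABELS:
        return Tier.TYPE_3
    # Only changes made up entirely of routine files are treated as Type 1.
    if paths and all(matches(p, ROUTINE_PATTERNS) for p in paths):
        return Tier.TYPE_1
    # Safety by design: unrecognized work defaults to human ownership.
    return Tier.TYPE_2


print(classify_change(["README.md", "docs/setup.md"], set()))  # Tier.TYPE_1
print(classify_change(["src/billing.py"], {"refactor"}))       # Tier.TYPE_3
print(classify_change(["src/auth/session.py"], {"refactor"}))  # Tier.TYPE_2
```

Because the rules are ordered, a refactor that touches an authentication module still lands in Type 2.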

4. AI as the Neutral "Nudge" Agent

The greatest barrier to fast reviews is "activation energy"—the mental effort required to switch contexts and understand a teammate's code. AI helpers like the PR Summarizer cut this "time to understand" in half by generating context-aware checklists. This lowers the barrier to entry for every reviewer on the team.

Furthermore, a Reviewer Router & Nudge Bot replaces the Scrum Master as the "enforcer." By assigning a Directly Responsible Individual (DRI) and tracking pre-agreed SLAs—such as a first review within 4 hours and completion within 24 hours—the AI surfaces accountability neutrally.

"Use AI to lower the activation energy of reviewing so people react instead of initiate."
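A router and nudge bot can start as two small functions. The sketch below uses the 4-hour first-review and 24-hour completion SLAs from the text; the least-loaded routing rule, the hypothetical `OpenPR` record, and the message wording are illustrative assumptions, and posting the messages to chat or to the PR is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

FIRST_REVIEW_SLA = timedelta(hours=4)   # first review within 4 hours
COMPLETION_SLA = timedelta(hours=24)    # review completed within 24 hours


@dataclass
class OpenPR:
    # Hypothetical record for a PR that is still open.
    number: int
    author: str
    opened_at: datetime
    first_review_at: Optional[datetime] = None
    dri: Optional[str] = None


def pick_dri(pr: OpenPR, open_reviews: dict[str, int]) -> str:
    # Least-loaded teammate who is not the author; ties break alphabetically.
    # Raises ValueError if nobody but the author is available.
    candidates = [name for name in open_reviews if name != pr.author]
    return min(candidates, key=lambda name: (open_reviews[name], name))


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def nudges(pr: OpenPR, now: datetime) -> list[str]:
    # Neutral, factual reminders: what is late, by how much, and who owns it.
    age = now - pr.opened_at
    messages = []
    if pr.first_review_at is None and age > FIRST_REVIEW_SLA:
        messages.append(f"PR #{pr.number}: no first review after {_hours(age):.0f}h "
                        f"(SLA {_hours(FIRST_REVIEW_SLA):.0f}h), DRI @{pr.dri}")
    if age > COMPLETION_SLA:
        messages.append(f"PR #{pr.number}: open for {_hours(age):.0f}h "
                        f"(SLA {_hours(COMPLETION_SLA):.0f}h), DRI @{pr.dri}")
    return messages


now = datetime(2024, 5, 10, 12, 0)
pr = OpenPR(42, author="raj", opened_at=now - timedelta(hours=5))
pr.dri = pick_dri(pr, {"ana": 3, "raj": 1, "lee": 1})  # "lee": raj is the author
print(nudges(pr, now))
# ['PR #42: no first review after 5h (SLA 4h), DRI @lee']
```

Routing by current review load is one simple way to spread the burden; the messages only state facts, so no individual has to play the enforcer.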

5. The "Micro-Pilot" Path to 60% Faster Reviews

Modernizing your flow doesn't require a months-long "AI transformation" initiative. We recommend a 4–8 week "Micro-Pilot" that begins with a policy agreement rather than a tool deployment: a short policy sheet, defining WIP limits and explicit SLAs, that every engineer can absorb in minutes.
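Writing the policy sheet down as data lets the same numbers drive both the human-readable sheet and any bot that enforces it. In this sketch the team-wide WIP limit of 5 open PRs is an invented placeholder (the text gives no number), and "review before starting new work" is one common way to apply a WIP limit, not a prescribed rule; the 4-hour and 24-hour SLAs are the ones quoted for the Nudge Bot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewPolicy:
    wip_limit_open_prs: int = 5   # placeholder team-wide WIP limit
    first_review_hours: int = 4
    completion_hours: int = 24

    def sheet(self) -> str:
        # The whole policy fits on a few lines an engineer can read in minutes.
        return "\n".join([
            f"1. No more than {self.wip_limit_open_prs} PRs open for review at once; "
            "at the limit, review before starting new work.",
            f"2. First review within {self.first_review_hours} hours of a PR opening.",
            f"3. Review completed within {self.completion_hours} hours.",
        ])

    def at_wip_limit(self, open_prs: int) -> bool:
        return open_prs >= self.wip_limit_open_prs


policy = ReviewPolicy()
print(policy.sheet())
print(policy.at_wip_limit(5))  # True: finish reviews before opening more work
```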

Once the policy is set, we engineer targeted AI helpers into your existing tools. This pragmatic approach typically yields a 40–60% reduction in review time and a 2–3x increase in participation. It proves the value of AI in a controlled environment before scaling the pattern to adjacent teams.
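To check whether a pilot actually lands in that range, compare the same measurements before and after. This sketch uses the median review time (so one abandoned PR does not skew the result) and counts distinct reviewers as "participation"; both are assumptions about how to measure, and the inputs in the example are invented.

```python
from statistics import median


def pilot_report(baseline_hours: list[float], pilot_hours: list[float],
                 baseline_reviewers: list[str], pilot_reviewers: list[str]) -> dict:
    before, after = median(baseline_hours), median(pilot_hours)
    return {
        # Positive means reviews got faster during the pilot.
        "review_time_reduction_pct": round(100 * (before - after) / before, 1),
        # Distinct people reviewing during the pilot vs. the baseline period.
        "participation_multiplier": round(len(set(pilot_reviewers)) / len(set(baseline_reviewers)), 2),
    }


# Invented inputs, for illustration only.
print(pilot_report([20, 30, 40], [10, 15, 20], ["ana", "ana", "raj"], ["ana", "raj", "lee", "kim"]))
# {'review_time_reduction_pct': 50.0, 'participation_multiplier': 2.0}
```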

Conclusion: From Bottleneck to AI-Factory

Solving the code review bottleneck is the first step in building a broader "AI-Factory." These same patterns of neutral nudges and automated metrics can eventually be cloned into deployment checks, documentation, and status reporting. Leadership gains a live AI Adoption KPI Dashboard to monitor usage, value, and risk signals across the entire organization.

The goal is a shift from manual nagging to a high-speed, transparent, and AI-assisted flow, one that transforms code review from a frustrating hurdle into a measurable competitive advantage.

As you evaluate your current delivery pipeline, ask the fundamental Synergies4 question: "If your AI agent had to 'earn its seat' at your table today based on measurable hours saved, would it still have a job tomorrow?"